AI Choreographer

Music Conditioned 3D Dance Generation with AIST++

¹University of Southern California, ²Google Research, ³UC Berkeley

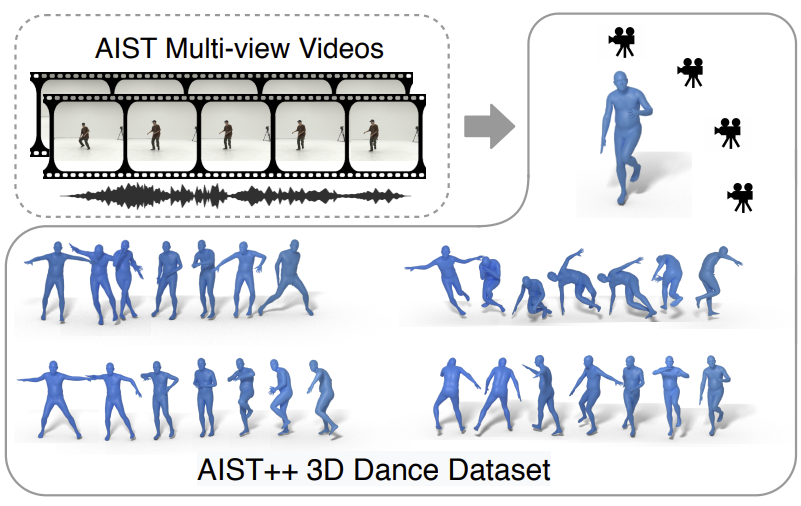

We present FACT, a cross-modal transformer-based model, and AIST++, a new 3D dance dataset that contains 3D motion reconstructed from real dancers paired with music (left). Our model generates realistic, smooth dance motion in 3D with full translation, which enables applications such as automatic motion retargeting to a novel character (right).

Paper Overview

Dance Generation Results

Here we show sampled dance motions generated by our proposed model. Given a 2-second seed motion sequence and a piece of music, our model generates long-range, non-freezing dance motion that follows the rhythm of the music. We render the generated dance motions on avatars from Mixamo.
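As a rough illustration of how a short seed motion plus a music track can be extended into a longer sequence, the sketch below assumes a frame-by-frame autoregressive rollout, which this page does not spell out. The names `rollout` and `placeholder_predictor`, the list-of-floats pose layout, and the one-value-per-frame music feature are all hypothetical stand-ins; the trained FACT model would take the place of the placeholder predictor.

```python
from typing import Callable, List, Sequence

Frame = List[float]  # hypothetical: one pose vector (or music feature vector) per frame


def rollout(
    seed_motion: Sequence[Frame],
    music_features: Sequence[Frame],
    predict_next: Callable[[Sequence[Frame], Sequence[Frame]], Frame],
    num_frames: int,
) -> List[Frame]:
    """Extend seed_motion to num_frames frames, one predicted frame at a time."""
    if not seed_motion:
        raise ValueError("seed motion must contain at least one frame")
    if num_frames > len(music_features):
        raise ValueError("music must cover every generated frame")

    seed_len = len(seed_motion)
    motion = [list(frame) for frame in seed_motion]  # copy so the seed is not mutated
    while len(motion) < num_frames:
        t = len(motion)
        # Condition on the most recent motion frames and the aligned music frames,
        # including the music frame for the pose being predicted.
        motion_window = motion[-seed_len:]
        music_window = music_features[t - seed_len : t + 1]
        motion.append(predict_next(motion_window, music_window))
    return motion


def placeholder_predictor(
    motion_window: Sequence[Frame], music_window: Sequence[Frame]
) -> Frame:
    """Stand-in for a trained model (assumption): nudge the last pose by the music value."""
    last_pose = motion_window[-1]
    beat_strength = music_window[-1][0]
    return [x + 0.1 * beat_strength for x in last_pose]


# Toy data: 4 seed frames of a 2-D "pose" and 10 frames of a 1-D music feature.
seed = [[0.0, 0.0] for _ in range(4)]
music = [[1.0] for _ in range(10)]
dance = rollout(seed, music, placeholder_predictor, num_frames=10)
print(len(dance), "frames generated")
```

Feeding each predicted frame back into the next window is what lets a fixed-length seed grow into a sequence as long as the music, under the assumptions stated above.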

AIST++ 3D Dance Motion Dataset

To train our model, we introduce AIST++, a large-scale 3D human dance motion dataset that contains a wide variety of 3D motion paired with music. It is built upon the AIST Dance Database, an uncalibrated multi-view collection of dance videos. The AIST++ dataset is designed to serve as a benchmark for both motion generation and motion prediction tasks. It can also potentially benefit other tasks such as 2D/3D human pose estimation. To our knowledge, AIST++ is the largest 3D human dance dataset, with 1408 sequences, 30 subjects and 10 dance genres covering both basic and advanced choreographies. It contains over 18k seconds of motion data with over 10M corresponding images. For more information about this dataset, please visit the dataset website.

Bibtex

More Thanks

We thank Chen Sun, Austin Myers, Bryan Seybold and Abhijit Kundu for helpful discussions. We thank Emre Aksan and Jiaman Li for sharing their baseline code bases with us. We also thank Kevin Murphy for his early attempts in this direction, as well as Peggy Chi and Pan Chen for their help with the user study experiments. We thank Jordi Pont-Tuset for sharing the dataset webpage template with us, and Shunsuke Saito for letting us know about this amazing dataset. We would also like to thank the people who built and released the amazing AIST Dance Video Dataset. This website is inspired by the template of pixelnerf.

Learn To Dance assets are Copyright 2021 Google LLC, licensed under the CC-BY 4.0 license.